NETWORKING Fiber Optic Cables Structured Cabling

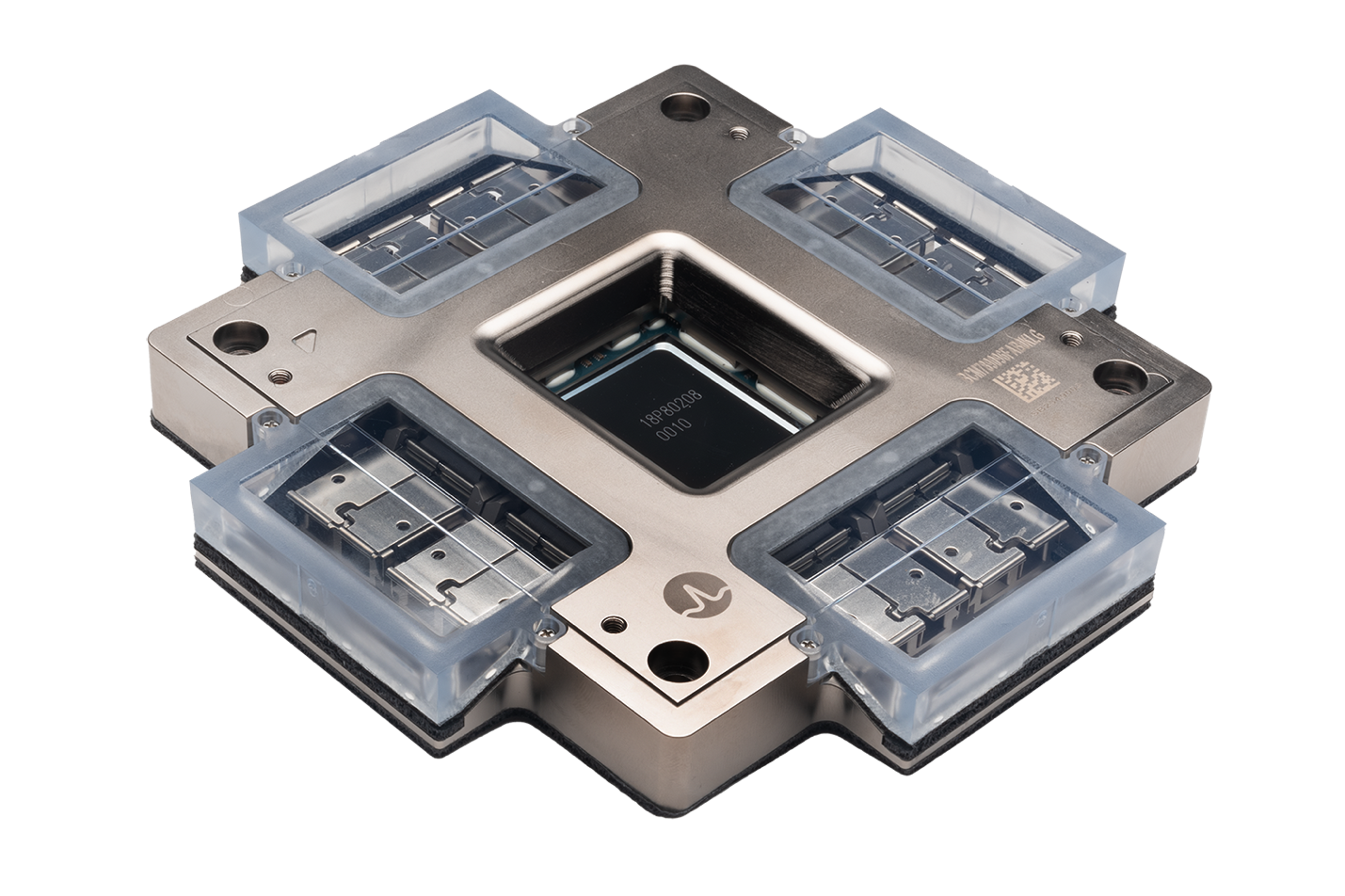

Co-Packaged Optics – End of Pluggables? What It Is, Why It Matters, and Who Needs to Pay Attention

Introduction: A New Chapter in Optical Connectivity

Low-Loss Fiber Connectivity for AI

Low-loss fiber connectivity is essential for modern AI data centers due to its ability to address...

Smart Cabling Organization Can Save Your Project

Starting a data center hardware upgrade is a significant undertaking, but if cabling has been left...

data center Structured Cabling infrastructure

Reference Guide for Applicable Data Center Structured Cabling Standards Organizations

In the rapidly evolving world of data centers, structured cabling standards play a crucial role in...

data center Fiber Cables IT infrastructures

How Undersea Fiber Cables Power the Global Internet: The Internet’s Arteries

The internet may feel like an invisible, instant force connecting the world, but behind it lies a...

data center Cabling Fiber Optic Cables

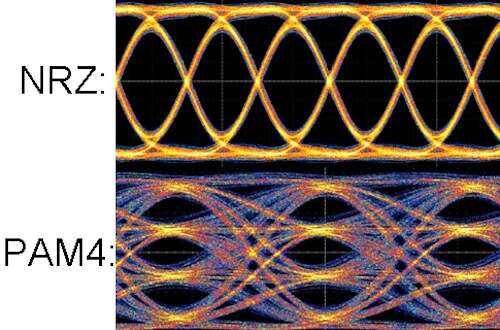

PAM4, the Superpower Boosting Your Data Center to Light Speed!

In the fast-paced world of data transmission, the demand for higher speeds and greater efficiency...

IT Equipment NETWORKING Hardware Security

Protect Your Hardware in Shared Spaces: Avoiding Network Disasters

When your network hardware is housed in a shared space, such as a wiring closet, co-location...

data center Sustainability Networking Cables

Streamlining Data Center Cabling Projects with Efficient Packaging and Expert Support

Optimizing Logistics for Efficient Fiber Trunk Installation in a Hyperscale High Performance Computing Environment

In the high-octane world of high-performance computing (HPC), a seamless and reliable network...

Recent Posts

Introduction: A New Chapter in Optical Connectivity

Low-loss fiber connectivity is essential for...

Starting a data center hardware upgrade is a...

Posts by Tag

- data center (12)

- Fiber Optic Cables (11)

- Cabling (7)

- NETWORKING (6)

- Structured Cabling (6)

- Fiber Optic Cabling (5)

- Fiber Cable (4)

- Networking Cables (4)

- Fiber Cables (3)

- Hardware Security (2)

- IT Infrastructure (2)

- Port Replication (2)

- Sustainability (2)

- AI (1)

- Brocade (1)

- Carbon Offsetting (1)

- Data Security (1)

- FCOE Works (1)

- ICLs (1)

- IT Equipment (1)

- IT Network (1)

- IT infrastructures (1)

- POE (1)

- Switches (1)

- Tapped Holes (1)

- data centers (1)

- hardware (1)

- infrastructure (1)

- storage (1)

- strategy (1)

Popular Posts

Why does the gauge matter in my network’s racks?...

High dB loss in fiber optic cabling...

Let’s look at the construction of fiber optic...